No one expects the EU Inquisition

The Digital Services Act would appoint “coordinators” with an army of “trusted flaggers” to police digital speech in every EU member state

The EU’s proposed Digital Services Act (DSA) bills itself as an update to the Union’s decade-old rules governing online commerce. But its promise of “measures which have consumer protection at their core” is misleading. Behind the consumer-protection facade lies a threat to freedom of speech in Europe. First, the DSA will hand powers of online content-moderation and regulatory enforcement to the European Commission and new national regulators. There will be a platform censor or “digital services coordinator” in each of the EU’s twenty-seven member states. Second, it will grant NGOs and identitarian organisations priority consideration when they object to content which offends their “collective interests”. And third, defining digital platforms as posing a “systemic risk” to public order and democracy, the DSA will force them to report annually their own mitigation activities. To avoid massive fines, platforms will likely save government censors the trouble of ordering the removal of content, by doing so themselves.

True, the draft regulation recommends some things that online platforms really should do. They should make sure they know who is behind the third-party businesses that sell on them. It would be helpful to have a single point of contact for regulators tasked with making sure that odious content, like child pornography, is taken down. Such measures would help to create trust in the internet as a safe place to shop, search for information and socially interact.

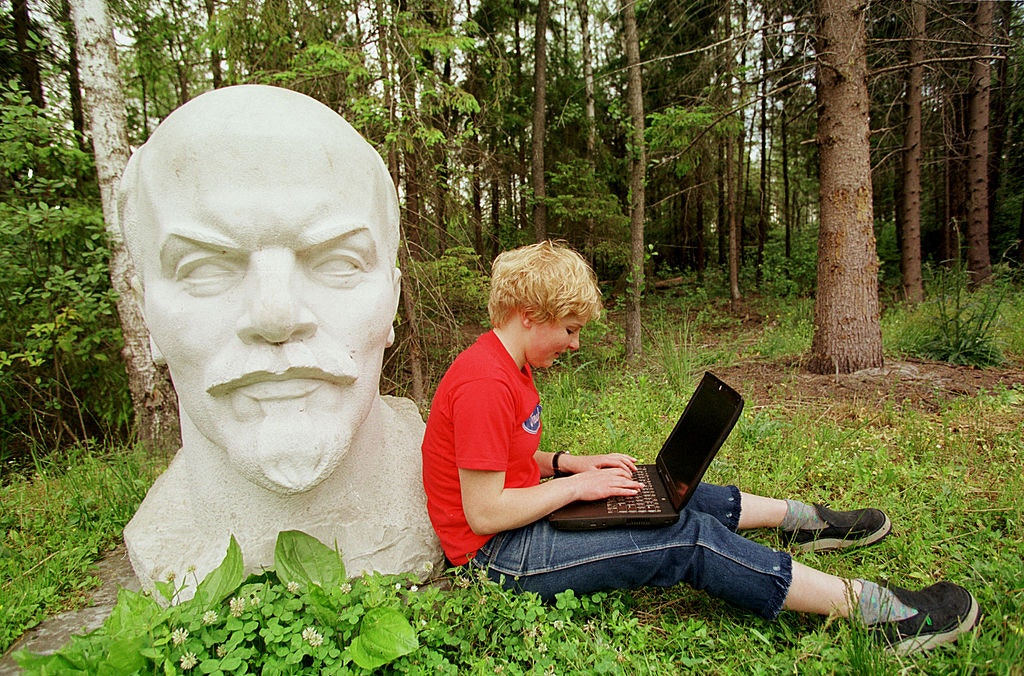

But there is no escaping the fact that the DSA will grant the powers of an 18th-century monarch not just to officials, but to a cadre of “experts” and NGOs. Together, they will be able to censor online publishing and to punish the owners of digital platforms as if they were revolutionary pamphleteers.

Presumably “illegal content” will mean one thing in Berlin and another in Budapest

It is through the regulation’s vagueness that its consequences become clear. The DSA scarcely defines the evils it opposes, “illegal content” and “systemic risk”. It tells us that “‘illegal content’ should be defined broadly”, later clarifying that it will mean whatever each Member State’s digital services coordinator says it means: presumably one thing in Berlin and another in Budapest. But at the apex of evils is “systemic risk”, to which, it says, large platforms are prone. The DSA explains systemic risk as a Pandora’s box of child pornography, hate speech, counterfeit products and content manipulation, all or any of which could threaten “public order”, “civic discourse” or even democracy itself. Large platforms will have to commission independent audits of how they manage systemic risk. This will include a forensic annual report of how they are moderating content.

It is certain that platforms will err on the side of caution and take down harmless content to avoid breaking ill-defined rules. All the more so when breaking them could put platforms on a path to paying fines of up to 6 per cent of their global turnover. Currently, the DSA’s rules on systemic risk would apply only to platforms with 45 million or more users. But the European Parliament’s Internal Market and Consumer Protection Committee (IMCO) has proposed that they should apply to platforms turning over EUR 50m or more in the EU. This would be a massive expansion of the regulation’s scope, applying speech regulation to thousands of websites, including online newspapers.

There is a genuine risk that the proposal not only fails to address but could exacerbate. The dynamic relationship between social media platforms and their users can cause an online counterpart to what marine engineers call the “free-surface effect”: a phenomenon whereby a few inches of water on the deck of a ship can, if the rolling sea pushes it to one side, capsize it. Social media platforms can do something like this to their individual users. A single tweet can cause offence out of all proportion to what it intended or even meant. It is the rapidly moving mass, and not the shallowness, of thinly spread outrage that overturns lives.

Licensed activists will aggravate social media’s risk

The DSA’s proposal for how illegal content should be identified could amplify this digital free-surface effect. The digital services coordinators will license “trusted flaggers” in each EU country to notify platforms about “illegal content”. Platforms will then have to give these notifications priority consideration. The criteria for licensing trusted flaggers include that they should have “expertise…in detecting illegal content” and that they “represent collective interests”. They will likely be NGOs, humanities and social sciences institutes, think-tanks, charities, and groups representing singular identities. Whose “collective interests” exactly will they represent? Won’t they be motivated to please those constituencies by going after increasingly innocuous content in an ever-expanding search for speech that offends them? This regulation will encourage motivated, licensed activists to aggravate social media’s digital free-surface effect.

The DSA has two parents. The first is anxiety that, because U.S. technology companies are leaving the continent behind, a pro-Europe industrial policy is needed. The second is an already-debunked security imperative: “fake news” and algorithmic manipulation corrupted recent U.S. and EU plebiscites, and this must not happen again. It’s worth remembering that every major technological shift in history has been accompanied by self-justified extensions of government control over the new media it spawns. What was true for printing in the 15th and broadcasting in the 20th centuries is just as true for social media today. Make no mistake, the DSA is about regulating the speech of Europe’s citizens.
